Performance evaluation of generative pre-trained transformer on the National Veterinary Licensing Examination in Japan

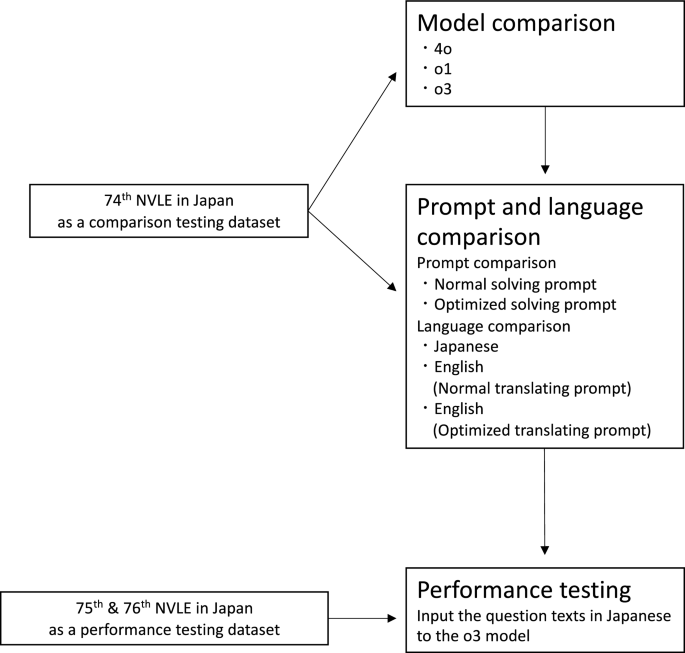

In this study, we evaluated the performance of GPT models on the NVLE in Japan. In the model comparison tests using the 74th NVLE, all tested conditions except GPT-4o in Section C exceeded the minimum passing scoring rate, and o1 and o3 outperformed GPT-4o. In the prompt and language comparison tests with o3, there was no significant difference in performance attributable to prompt format or question language. Furthermore, in the validation tests using the 75th and 76th NVLEs, o3 achieved scores well above the minimum passing scoring rate across all sections with the Normal solving prompt and the original Japanese questions.
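The per-section pass criterion used throughout this section can be expressed as a simple check: a condition passes only if the scoring rate (correct answers divided by the number of questions) in every section meets that section's minimum passing scoring rate. The sketch below assumes this per-section rule; the `SectionResult` helper, the thresholds, and the question counts are hypothetical and are neither the official NVLE values nor the study's data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SectionResult:
    """Hypothetical container for one section's score (illustration only)."""
    name: str
    correct: int
    total: int
    min_pass_rate: float  # hypothetical minimum passing scoring rate, 0-1

    @property
    def rate(self) -> float:
        # Scoring rate = correct answers / questions in the section
        return self.correct / self.total

    @property
    def passed(self) -> bool:
        return self.rate >= self.min_pass_rate


def passes_all_sections(results: list[SectionResult]) -> bool:
    # A condition passes only if every section meets its own threshold
    return all(r.passed for r in results)


if __name__ == "__main__":
    # Illustrative numbers only; not the study's data or official thresholds
    results = [
        SectionResult("C", correct=26, total=40, min_pass_rate=0.7),
        SectionResult("D", correct=36, total=40, min_pass_rate=0.7),
    ]
    for r in results:
        print(f"Section {r.name}: {r.rate:.1%} ({'pass' if r.passed else 'fail'})")
    print("All sections passed:", passes_all_sections(results))
```

Because the rule is a conjunction over sections, a single section below its threshold (Section C in this made-up example) is enough to fail the whole condition.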

In the model comparison tests, GPT-4o did not meet the minimum passing scoring rate for Section C, whereas o1 and o3 surpassed it in all sections. Notably, o1 and o3 performed significantly better than GPT-4o in most sections. The o1 and o3 models have more sophisticated reasoning abilities than GPT-4o19,27,28, and this improvement in reasoning likely accounts for their better performance on the NVLE in Japan. However, no significant difference was observed between o1 and o3, which suggests that performance on degree-level veterinary licensing examination questions may be approaching a ceiling.
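This section reports significant and non-significant differences between models but does not restate which statistical test was used. For paired binary outcomes (two models scored on the same questions), one common choice is the exact McNemar test, which uses only the questions on which the two models disagree. The sketch below is offered under that assumption and is not necessarily the procedure used in this study; the function name `mcnemar_exact` and the example counts are hypothetical.

```python
from math import comb


def mcnemar_exact(a_correct: list[bool], b_correct: list[bool]) -> tuple[int, int, float]:
    """Exact (binomial) McNemar test for two models scored on the same questions.

    Returns (b, c, p): b = questions only model A answered correctly,
    c = questions only model B answered correctly, p = two-sided p-value.
    """
    if len(a_correct) != len(b_correct):
        raise ValueError("both models must be scored on the same questions")
    b = sum(1 for x, y in zip(a_correct, b_correct) if x and not y)
    c = sum(1 for x, y in zip(a_correct, b_correct) if y and not x)
    n = b + c
    if n == 0:
        return b, c, 1.0  # no discordant questions, so no evidence of a difference
    # Under H0, b ~ Binomial(n, 0.5); the two-sided p-value doubles the
    # smaller tail P(X <= min(b, c)), capped at 1.
    k = min(b, c)
    lower_tail = sum(comb(n, i) for i in range(k + 1)) / 2**n
    return b, c, min(1.0, 2 * lower_tail)


if __name__ == "__main__":
    # Hypothetical 60-question section: 40 both right, 6 both wrong,
    # 2 right only for model A, 12 right only for model B.
    model_a = [True] * 40 + [False] * 6 + [True] * 2 + [False] * 12
    model_b = [True] * 40 + [False] * 6 + [False] * 2 + [True] * 12
    print(mcnemar_exact(model_a, model_b))
```

Questions that both models answer correctly (or both answer incorrectly) do not affect the result, which is why two models with high overall accuracy, such as o1 and o3, can show no significant difference even when their totals differ slightly.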

Regarding the prompt and language comparisons, o3 consistently exceeded the minimum passing scoring rate without elaborate prompt engineering or translation into English. A recent study reported that translating Japanese NMLE questions into English improved GPT-4o's performance24. One explanation offered in that study is that GPT's training data come mainly from English-language websites29, which may enable it to understand English better than Japanese30. In contrast, translating the questions into English did not improve performance in our study, suggesting that GPT's comprehension of Japanese has advanced to a level comparable to its comprehension of English, at least for relatively simple veterinary medical questions. These findings highlight the potential for applying GPT directly in the veterinary medical field in Japanese.

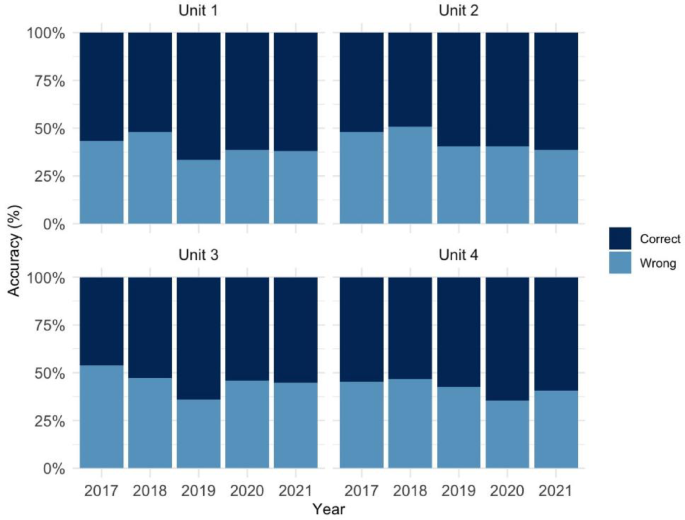

All models showed a noticeable decline in accuracy on image-based questions in Sections C and D. This trend is consistent with a previous report evaluating the performance of LLMs in the veterinary field31 and may reflect insufficient domain-specific training of current ViT-based image encoders on veterinary medical images. Despite this difficulty in extracting information from domain-specific images, o1 and o3 outperformed GPT-4o on the image-based questions in Sections C and D, which likely reflects improvements in image recognition and in the integration of visual and textual reasoning18,19,27. Notably, GPT-4o's score for Section C fell below the minimum passing scoring rate, consistent with a prior study of GPT-4o on the NMLE in Japan in which GPT-4o also performed worse on image-based questions than on text-based questions. However, GPT-4o's correct answer rate for Section C in our study (55%) was much lower than its rate on image-based questions in the prior NMLE study (68.7%)32. Moreover, in a previous study24, GPT-4o achieved a higher score on image-based NMLE questions even without access to the images than it did on Section C with the images in our study. These findings suggest that the image-based questions in the NMLE in Japan rely less on visual information and can often be solved from the textual context alone. In contrast, the NVLE in Japan, particularly Section C, relies so heavily on image information that the model's image recognition capability directly influences performance. Section D, by comparison, includes extensive supplementary textual information, which likely contributed to GPT's higher performance in Section D than in Section C.
Overall, o1 and o3 achieved passing performance on the NVLE in Japan, which includes a highly image-dependent section, underscoring their enhanced capacity for visual-textual reasoning.
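The image-versus-text comparison above amounts to stratifying accuracy by section and by whether a question includes an image. A minimal sketch follows; the `Item` record and the example responses are hypothetical, and the study's own tabulation may have differed.

```python
from collections import defaultdict
from typing import NamedTuple


class Item(NamedTuple):
    section: str     # e.g. "C" or "D"
    has_image: bool  # True for image-based questions
    correct: bool    # whether the model's answer matched the answer key


def accuracy_by_modality(items: list[Item]) -> dict[tuple[str, str], float]:
    """Accuracy for each (section, "image" or "text") stratum."""
    tally: dict[tuple[str, str], list[int]] = defaultdict(lambda: [0, 0])
    for it in items:
        key = (it.section, "image" if it.has_image else "text")
        tally[key][0] += int(it.correct)  # correct answers in the stratum
        tally[key][1] += 1                # questions in the stratum
    return {key: right / total for key, (right, total) in tally.items()}


if __name__ == "__main__":
    # Hypothetical responses for illustration only
    items = [
        Item("C", True, True), Item("C", True, False), Item("C", False, True),
        Item("D", True, True), Item("D", False, True),
    ]
    for (section, kind), acc in sorted(accuracy_by_modality(items).items()):
        print(f"Section {section}, {kind}: {acc:.0%}")
```

Reporting the strata separately keeps a section's overall score from hiding a gap between its image-based and text-based questions.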

In the validation tests using the 75th and 76th NVLEs in Japan, o3 exceeded the minimum passing scoring rate in all sections with the Normal prompt and the original Japanese questions, recording accuracy rates of approximately 80% for Section C and over 90% for the other sections. Previous studies evaluating GPT-4o on the NMLE in Japan reported accuracy rates of around 80% for essential questions and about 70% for the other sections24,32. Furthermore, studies of GPT-4o on medical licensing examinations in the US and China reported lower scores than those observed in this study22,33. Although results from different examinations cannot be compared directly, o3 achieved substantially higher scores than those previously reported for GPT models. Because no published studies have yet evaluated o3 on either the NVLE or the NMLE, a direct comparison with the existing literature is not possible.

The analysis of incorrect responses revealed a trend toward lower performance in the Basic knowledge of veterinary medical practice and Clinical veterinary medicine areas than in the other areas. The Basic knowledge of veterinary medical practice area includes questions on veterinary laws, which likely contributed to the lower performance in this area. Questions on Japanese legal topics have been reported to be particularly challenging for GPT24, as they often rely on country-specific legal systems and frameworks that are likely underrepresented in its predominantly English-language training data29. The Clinical veterinary medicine area was also challenging, as it requires not only factual knowledge but also the ability to integrate information from multiple sources and to perform multistep reasoning, both of which remain limited in current GPT models34. Thus, although GPT has shown marked improvements in logical reasoning, accurately resolving such complex tasks is likely to remain a considerable challenge19,27,28.
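The area-level analysis of incorrect responses can be summarized with two quantities per content area: the error rate within the area and the area's share of all incorrect responses. The sketch assumes the responses are available as (area, correct) pairs; `error_profile`, the example responses, and the "Other area" label are hypothetical.

```python
from collections import Counter


def error_profile(responses: list[tuple[str, bool]]) -> list[tuple[str, float, float]]:
    """Return (area, error_rate, error_share) rows, highest error rate first.

    error_rate  = incorrect answers in the area / questions in the area
    error_share = incorrect answers in the area / incorrect answers overall
    """
    totals = Counter(area for area, _ in responses)
    errors = Counter(area for area, ok in responses if not ok)
    n_errors = sum(errors.values())
    rows = [
        (area, errors[area] / totals[area], errors[area] / n_errors if n_errors else 0.0)
        for area in totals
    ]
    return sorted(rows, key=lambda row: row[1], reverse=True)


if __name__ == "__main__":
    # Hypothetical responses; "Other area" is a placeholder label
    responses = [
        ("Basic knowledge of veterinary medical practice", False),
        ("Basic knowledge of veterinary medical practice", True),
        ("Clinical veterinary medicine", False),
        ("Clinical veterinary medicine", True),
        ("Other area", True),
        ("Other area", True),
    ]
    for area, rate, share in error_profile(responses):
        print(f"{area}: error rate {rate:.0%}, share of errors {share:.0%}")
```

The two measures answer different questions: a large area can account for many errors while having a modest error rate, so a "lower performance" claim is best read against the per-area rate.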

It should be noted that OpenAI explicitly prohibits the direct use of GPT for medical or veterinary diagnosis and treatment decision-making35. Even when GPT is not used directly for such purposes, particular caution is warranted, as GPT may generate fabricated information (so-called hallucinations)36 and functions as an opaque black-box system30. We also caution that benchmark scores on medical examinations do not directly reflect real-world readiness37. Realistic applications in clinical practice are therefore limited to supportive roles, such as diagnostic assistance, risk detection, automatic summarization of medical records, and format conversion38. GPT could also be used effectively in educational settings5, for example, as a virtual tutor for veterinary students. The performance demonstrated in this study suggests that GPT has the potential to support Japanese veterinary medicine and education in such roles.

This study has several limitations. First, the 74th (2023) NVLE, which was used in the model comparison tests and the prompt and language comparison tests, was publicly released (March 2023) before the knowledge cutoffs of GPT-4o, o1, and o3 (October 2023, October 2023, and June 2024, respectively). Therefore, we cannot completely exclude the possibility that the 74th NVLE questions were included in the training data of these models. Similarly, the 75th (2024) NVLE, which was used in the validation tests, was publicly released (March 2024) before the knowledge cutoff of o3 (June 2024). The results on the 74th and 75th NVLEs should therefore be interpreted with caution. The 76th (2025) NVLE was the only examination released after the knowledge cutoffs of all three models and was therefore used only in the validation tests. Because the 76th NVLE was released after these cutoffs, o3's high performance on it is unlikely to be attributable to data leakage and provides stronger evidence of the model's inherent capability. Our main conclusions are therefore grounded primarily in the 76th NVLE results. Second, the temperature was fixed at 1.0 because the temperature of the o1 and o3 models cannot be changed from its default value of 1.0; we also set the temperature of GPT-4o to 1.0 to standardize the experimental conditions. In performance tests that primarily evaluate model knowledge, a temperature of 0 or another low value is generally preferable because it produces more deterministic outputs. Temperature may also interact with sampling strategies such as majority voting, potentially affecting the stability or accuracy of the results. Although this interaction was not systematically evaluated in this study, it may be a relevant factor for future investigations.
Finally, LLM performance is highly time-sensitive because the models are frequently updated and their architectures and training data are not publicly disclosed. The results of this study therefore reflect the performance of the evaluated models at the time of the experiments and may not fully generalize to future models. Future work could also compare the performance of newer state-of-the-art models, explore other approaches such as retrieval-augmented generation, and evaluate prompt generation methods such as automated prompt engineering.
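The temperature limitation and its possible interaction with majority voting can be illustrated with a toy model. The sketch assumes a fixed set of answer options with hypothetical scores (logits): dividing the logits by the temperature T before the softmax makes the distribution sharper as T decreases, and majority voting takes the most frequent answer over several independent samples. It is not a description of how o1, o3, or GPT-4o produce answers internally, and the option scores and function names are invented for illustration.

```python
import math
import random
from collections import Counter


def softmax_with_temperature(logits: list[float], temperature: float) -> list[float]:
    """Softmax of logits / T; lower T concentrates probability on the top option."""
    if temperature <= 0:
        raise ValueError("temperature must be positive (T -> 0 approaches argmax)")
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_answer(options: list[str], logits: list[float],
                  temperature: float, rng: random.Random) -> str:
    """Draw one answer from the temperature-scaled distribution."""
    probs = softmax_with_temperature(logits, temperature)
    return rng.choices(options, weights=probs, k=1)[0]


def majority_vote(answers: list[str]) -> str:
    """Most frequent answer; ties go to the answer seen first."""
    return Counter(answers).most_common(1)[0][0]


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so the illustration is repeatable
    options = ["a", "b", "c", "d", "e"]
    logits = [2.0, 1.5, 0.2, 0.1, 0.0]  # hypothetical option scores
    for t in (0.2, 1.0):
        probs = softmax_with_temperature(logits, t)
        votes = [sample_answer(options, logits, t, rng) for _ in range(5)]
        print(f"T={t}: P(top)={max(probs):.2f}, votes={votes}, "
              f"majority={majority_vote(votes)}")
```

In this toy setting, single samples at T = 1.0 disagree more often, so voting over several samples can stabilize the final answer, whereas at low T the samples are already nearly identical and voting changes little. This is one way the interaction mentioned in the limitations could arise, not a result from the study.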

In conclusion, o3 achieved scores well above the minimum passing scoring rate across all sections using the original Japanese questions and the Normal prompt. Notably, this performance was achieved without translation or advanced prompt engineering, reflecting substantial improvements in GPT's inherent capabilities. These findings suggest that GPT's evolution opens new possibilities for knowledge support in veterinary medicine.
