Performance of ChatGPT-3.5 and GPT-4 in national licensing examinations for medicine, pharmacy, dentistry, and nursing: a systematic review and meta-analysis | BMC Medical Education

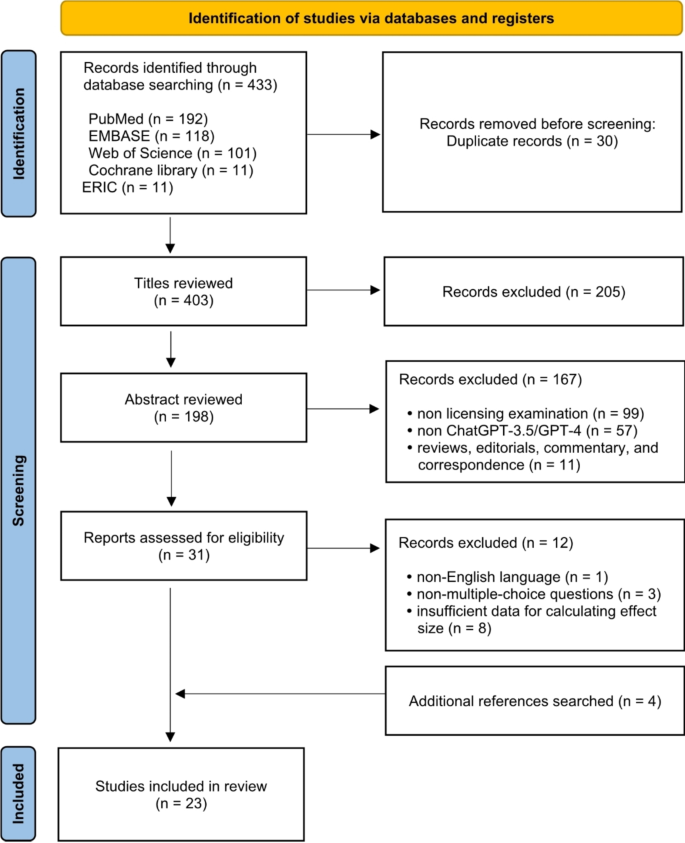

Study selection

The initial literature search yielded 433 articles. After removing duplicates and screening titles and abstracts, 31 articles were retained for full-text review. Of these, one was excluded because it was written in a language other than English, three because they used non-multiple-choice questions, and eight because they provided insufficient data for calculating an effect size. After assessing the articles for eligibility, 23 articles were included in this review (Fig. 1).

PRISMA flowchart of included studies

Risk of bias assessment

The results of the risk of bias assessment, performed with the Joanna Briggs Institute (JBI) Critical Appraisal Checklist for analytical cross-sectional studies, are presented in Table 1. Two studies were deemed to be at high risk [33, 34], three at moderate risk [14, 28, 32], and 18 at low risk [11, 13, 21, 27, 29, 30, 31, 35, 36, 37, 38, 39, 40, 41, 42, 43, 44, 45]. The most commonly observed weaknesses related to the identification of confounding factors and the strategies to deal with them.

Study characteristics

The characteristics of the 23 included studies [11, 13, 14, 21, 27, 28, 29, 30, 31, 32, 33, 34, 35, 36, 37, 38, 39, 40, 41, 42, 43, 44, 45] are shown in Table 2. All were published between 2022 and 2024. Five studies were conducted in the United States [11, 13, 14, 28, 37], one in the UK [36], six in Japan [33, 35, 39, 40, 41, 45], four in China [29, 42, 43, 44], two in Taiwan [21, 32], and one each in Australia [34], Belgium [38], Peru [30], Switzerland [31], and Saudi Arabia [27]. By field, 17 articles focused on medicine [11, 13, 14, 27, 29, 30, 33, 34, 36, 37, 38, 40, 41, 42, 43, 44, 45], three on pharmacy [21, 28, 35], two on nursing [32, 39], and one on dentistry [31]. The number of questions per examination ranged from 21 questions on communication skills, ethics, empathy, and professionalism in the USMLE [14] to 1510 questions across four separate exams in 2022 and 2023 in the Registered Nurse License Exam [32].

The performance of ChatGPT-3.5 varied widely across these studies, from 38% in the Japanese National Medical Licensure Examination [33] to 73% in the Peruvian National Licensing Medical Examination [30]. GPT-4’s performance also varied but was generally more accurate, ranging from 64.4% in the Swiss Federal Licensing Examination in Dental Medicine [31] to 100% on USMLE questions assessing soft skills [14]. This consistent improvement across exams and regions indicates that GPT-4 handles diverse medical licensing examinations more accurately than its predecessor.

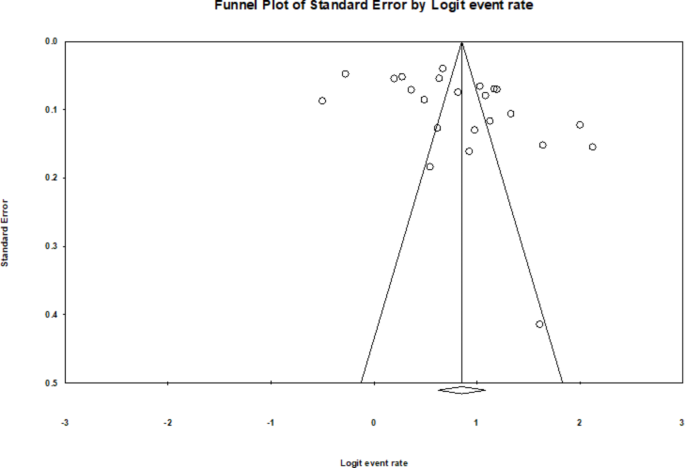

Publication bias

A funnel plot of the included studies showed some asymmetry (Fig. 2). Egger’s regression test yielded a significant result (p = 0.028), suggesting the presence of publication bias in the meta-analysis. In contrast, the trim-and-fill analysis indicated that no studies needed to be trimmed or imputed, so the overall effect size remained unchanged. Additionally, Rosenthal’s fail-safe number, calculated in CMA as 310, exceeded the tolerance level of 5n + 10 [51], where n is the number of studies included in the meta-analysis, which also suggests the absence of publication bias. Taken together, we believe that publication bias is unlikely to have affected this study.
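As a quick arithmetic check on the fail-safe comparison, the following sketch evaluates the 5n + 10 tolerance level. It assumes n = 23, the number of included studies reported above; the function name is illustrative and is not part of CMA.

```python
def fail_safe_threshold(n_studies: int) -> int:
    """Tolerance level 5n + 10 against which the fail-safe number is compared."""
    return 5 * n_studies + 10


n_studies = 23     # assumption: n equals the 23 included studies
fail_safe_n = 310  # Rosenthal's fail-safe number reported in the text

threshold = fail_safe_threshold(n_studies)
print(threshold)                # 125
print(fail_safe_n > threshold)  # True
```

Under this assumption the reported fail-safe number is well above the tolerance level, which is the comparison the text relies on.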

Funnel plot for studies included in the meta-analysis

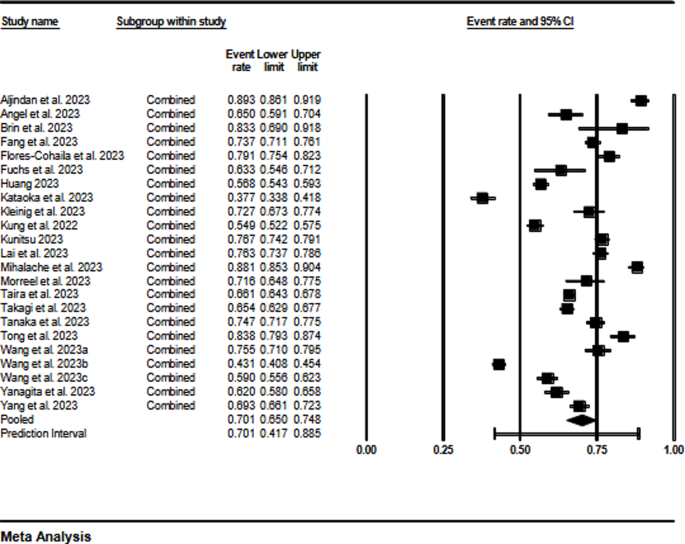

Overall analysis

A random-effects model was selected on the assumption that effect sizes would vary among studies because of differences between examinations. Indeed, the effect sizes of the primary studies were heterogeneous (Q = 1201.303, df = 22, p < 0.001), with an I² of 98.2%. The overall effect size for the performance of the ChatGPT models was 70.1% (95% CI, 65.0-74.8%). The forest plot of individual and overall effect sizes is shown in Fig. 3.
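The reported heterogeneity statistics can be cross-checked with the standard relationship between Cochran's Q and I², namely I² = (Q − df) / Q, floored at zero. The sketch below applies it to the reported Q and df; the function name is illustrative, and this is not necessarily how CMA computes the value internally.

```python
def i_squared(q: float, df: int) -> float:
    """I-squared (in percent) from Cochran's Q and its degrees of freedom."""
    if q <= 0:
        return 0.0
    # Negative values are truncated to zero by convention.
    return max(0.0, (q - df) / q) * 100.0


i2 = i_squared(1201.303, 22)
print(round(i2, 1))  # 98.2
```

The result agrees with the I² reported in the text.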

Forest plot of effect sizes using a random-effects model

Subgroup analyses

Under a random-effects model, subgroup analyses were conducted for two categorical variables: (1) the type of ChatGPT model (ChatGPT-3.5 vs. GPT-4); and (2) the field of health licensing examination (medicine, pharmacy, dentistry, and nursing), as detailed in Table 3. Other potential moderators could not be examined because they were too infrequently reported or too inadequately described to support a comprehensive subgroup analysis.

ChatGPT models

The effect size for performance by model was 58.9% (95% CI, 56.2-61.6%) for ChatGPT-3.5 and 80.4% (95% CI, 78.6-82.0%) for GPT-4. The difference between the two models was significant (Q = 177.027, p < 0.001).

Fields of health licensing examinations

The effect size for performance was 71.5% (95% CI, 66.3-76.2%) in pharmacy, 69.7% (95% CI, 65.9-73.2%) in medicine, 63.2% (95% CI, 54.6-71.1%) in dentistry, and 61.8% (95% CI, 58.7-64.9%) in nursing. The difference between the fields was significant (Q = 15.334, p = 0.002).
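The p-values attached to the between-subgroup Q statistics can be approximated from the chi-square distribution. The sketch below assumes df = number of subgroups − 1 (1 for the two models, 3 for the four fields), which the text does not state explicitly; `chi2_sf` is an illustrative helper built on closed-form expressions for integer degrees of freedom, not a CMA function.

```python
import math


def chi2_sf(x: float, df: int) -> float:
    """Upper-tail probability of a chi-square variable with integer df >= 1."""
    if x <= 0:
        return 1.0
    half = x / 2.0
    if df % 2 == 0:
        # Even df: finite sum of Poisson-type terms.
        terms = sum(half ** k / math.factorial(k) for k in range(df // 2))
        return math.exp(-half) * terms
    # Odd df: complementary error function plus a finite sum
    # over half-integer powers.
    m = (df - 1) // 2
    tail = sum(half ** (k - 0.5) / math.gamma(k + 0.5) for k in range(1, m + 1))
    return math.erfc(math.sqrt(half)) + math.exp(-half) * tail


# Assumed degrees of freedom: number of subgroups minus one.
p_models = chi2_sf(177.027, df=1)  # ChatGPT-3.5 vs. GPT-4
p_fields = chi2_sf(15.334, df=3)   # medicine, pharmacy, dentistry, nursing

print(p_models < 0.001)  # True
print(f"{p_fields:.4f}")
```

Under these assumed degrees of freedom, the first p-value is far below 0.001 and the second should land close to the reported p = 0.002.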

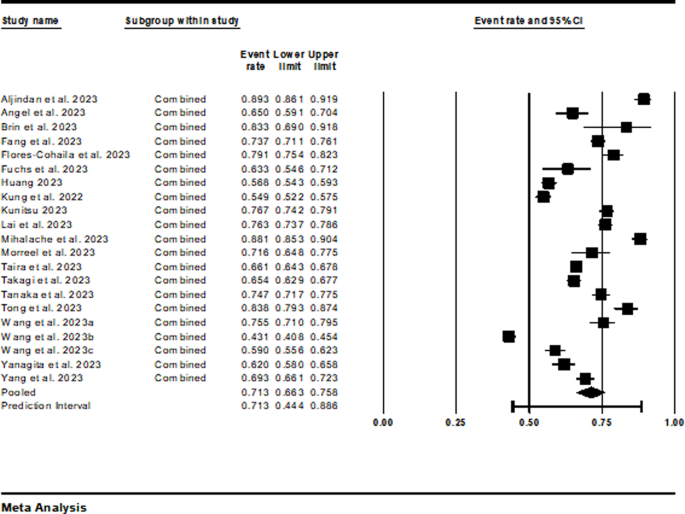

Sensitivity analysis

The sensitivity analysis showed that removing the high-risk studies did not materially alter the overall accuracy, which was 71.3% (95% CI, 66.3-75.8%; p < 0.001) with an I² of 98.1% (Fig. 4), indicating that the results were robust.

Sensitivity analysis omitting high-risk studies
